What is data federation

Data federation is a data management technique that lets an organization query and manage data from multiple sources as if it were stored in a single database, without physically moving, copying, or merging that data into one location. This approach enables businesses to view and analyze consolidated data in real time, supporting decision-making and operational efficiency. This capability matters in fast-paced business environments, where access to timely and accurate information is a key competitive advantage.

By leveraging data federation technologies, organizations can create a unified data layer that abstracts the underlying complexity of diverse data models and formats. This makes it easier for users to access and analyze critical data across various systems, including on-premises databases, cloud storage, and even big data platforms, without the need for time-consuming ETL (Extract, Transform, Load) processes.

Data federation aggregates data from multiple sources without physically moving or copying data.

Key concepts and components of data federation

The ability of data federation to provide a unified data view from multiple disparate sources relies on several foundational concepts and components. Understanding these elements is crucial for effectively implementing and leveraging data federation technologies.

Data Virtualization

Data virtualization is a key process in data federation that enables real-time or near-real-time access to data across various sources without requiring physical integration. Data virtualization creates a virtual layer that abstracts the underlying technical details of each data source, presenting users with a single, integrated view. This approach facilitates agile data access and analysis, significantly reducing the time and resources required for data preparation.

Data virtualization is powered by sophisticated algorithms that can interpret and transform different data formats and structures on the fly. This capability is essential for organizations dealing with diverse data environments, including traditional databases, big data platforms, and cloud-based storage systems.
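To make the idea of a virtual layer concrete, here is a minimal sketch in Python. It assumes two stand-in sources, an in-memory SQLite table and a JSON string, and a hypothetical `customer_totals` function that joins them at query time. None of these names come from a real product; they only illustrate the principle of reading from each source in place.

```python
import json
import sqlite3

# Source 1: a relational table (in-memory SQLite stands in for a real database).
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
db.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Acme"), (2, "Globex")])

# Source 2: a JSON document (stands in for a file or an API response).
orders_json = (
    '[{"customer_id": 1, "total": 250.0},'
    ' {"customer_id": 1, "total": 100.0},'
    ' {"customer_id": 2, "total": 75.5}]'
)


def customer_totals():
    """Virtual view: combine both sources at query time without copying either."""
    totals = {}
    for order in json.loads(orders_json):
        cid = order["customer_id"]
        totals[cid] = totals.get(cid, 0.0) + order["total"]
    rows = db.execute("SELECT id, name FROM customers ORDER BY id").fetchall()
    return [{"name": name, "total": totals.get(cid, 0.0)} for cid, name in rows]
```

Calling `customer_totals()` reads both sources each time, so the result reflects whatever they currently hold. A production virtualization layer would add query pushdown, caching, and security, which this sketch leaves out.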

Data Sources and Heterogeneity

The diversity of data sources and their inherent heterogeneity pose significant challenges in data management. Data federation addresses these challenges by harmonizing data from structured databases, semi-structured files (like XML and JSON), and unstructured data (such as text documents and emails). The ability to seamlessly integrate this wide range of data types underlines the versatility of data federation solutions.

Managing this heterogeneity requires robust mechanisms for data discovery, schema mapping, and data transformation, ensuring that the federated data view is coherent and useful for analysis.
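Schema mapping can be sketched in a few lines of Python. The source names (`crm`, `erp`), the field names, and the `to_unified` helper below are all hypothetical; the point is only that each source keeps its own field names while consumers see one shared vocabulary.

```python
# Hypothetical per-source mappings: source field name -> unified field name.
SCHEMA_MAPS = {
    "crm": {"cust_name": "name", "cust_email": "email"},
    "erp": {"fullName": "name", "emailAddress": "email"},
}


def to_unified(source, record):
    """Rename a record's fields to the unified schema; unmapped fields are dropped."""
    mapping = SCHEMA_MAPS[source]
    return {unified: record[field] for field, unified in mapping.items() if field in record}


crm_row = {"cust_name": "Ada Lovelace", "cust_email": "ada@example.com", "region": "EU"}
erp_row = {"fullName": "Alan Turing", "emailAddress": "alan@example.com"}
unified = [to_unified("crm", crm_row), to_unified("erp", erp_row)]
```

Dropping unmapped fields such as `region` is a design choice made here for brevity; a real mapping layer might keep them under a namespaced key instead.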

Metadata Management

Metadata management is a critical component of data federation that provides the necessary context for data discovery, integration, and governance. Metadata in a federated environment includes information about the data source, structure, semantics, and access policies. Effective metadata management facilitates efficient data search and retrieval, enhances data quality, and supports compliance with data governance standards.

Metadata also plays a pivotal role in optimizing query performance across federated sources. By maintaining detailed metadata, data federation systems can intelligently route queries to the appropriate sources and apply necessary transformations, ensuring that users receive accurate and timely information.
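As a rough illustration of metadata-driven routing, the Python sketch below keeps a small catalog that records where each entity lives and which fields it exposes. The catalog contents, the source names, and the `route` function are assumptions made for this example, not part of any specific federation product.

```python
# Hypothetical metadata catalog: which source holds each entity, and its fields.
CATALOG = {
    "customers": {"source": "crm_db", "fields": {"id", "name"}},
    "invoices": {"source": "erp_db", "fields": {"id", "customer_id", "amount"}},
}


def route(entity, fields):
    """Return the source for an entity after checking the requested fields exist."""
    entry = CATALOG.get(entity)
    if entry is None:
        raise KeyError(f"unknown entity: {entity}")
    missing = set(fields) - entry["fields"]
    if missing:
        raise ValueError(f"{entity} has no fields: {sorted(missing)}")
    return entry["source"]
```

Here `route("invoices", ["amount"])` returns `"erp_db"`, while a request for a field the catalog does not list fails before any source is contacted. Validating against metadata first is one way a federation layer can avoid sending doomed queries to remote systems.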

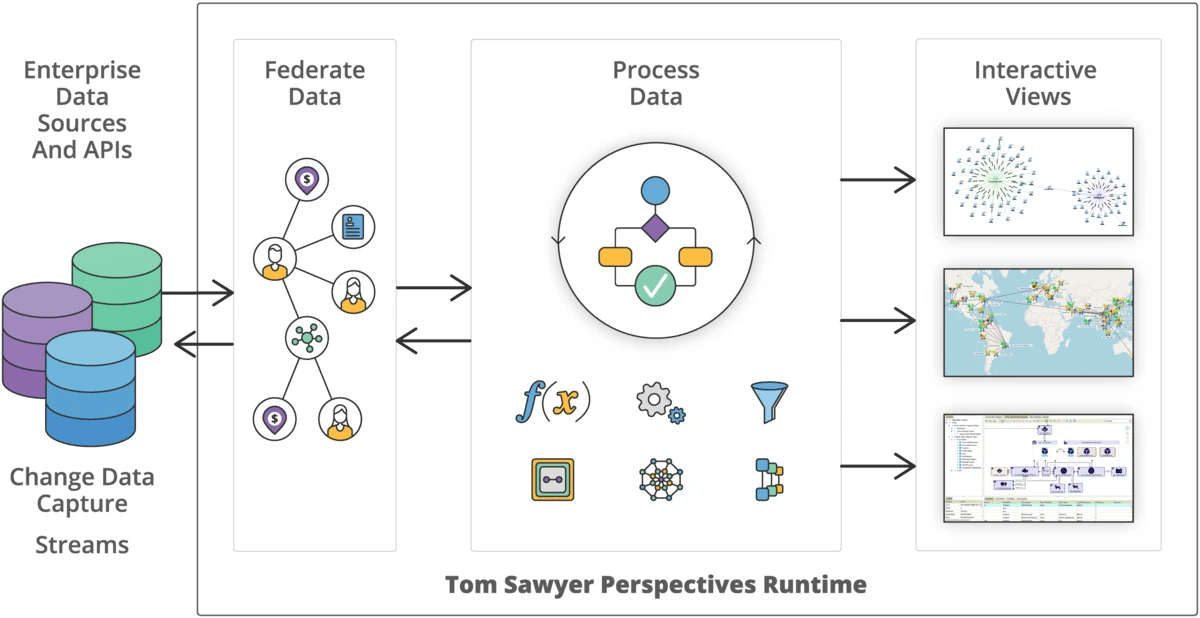

Data federation with Tom Sawyer Perspectives

Our mature, data-agnostic graph platform, Perspectives, includes data federation capabilities that combine data residing in different data sources and with different data structures, providing enterprises with real-time access to a unified view of their data.

The process of data federation involves connecting to data sources using provided integrators, extracting the schema to understand the structure of the data, and binding the data source and the schema. This procedure is essential for achieving the flexibility and efficiency that data federation enables.
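The connect, extract, and bind steps can be sketched generically in Python. This is not the Perspectives API; it uses only the standard `sqlite3` module, with an in-memory database as a stand-in source and a hypothetical `employees` table, to show what each step amounts to.

```python
import sqlite3

# Step 1: connect to a source (an in-memory SQLite database is a stand-in).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE employees (id INTEGER PRIMARY KEY, name TEXT, manager_id INTEGER)")
conn.executemany(
    "INSERT INTO employees VALUES (?, ?, ?)",
    [(1, "Dana", None), (2, "Eli", 1)],
)

# Step 2: extract the schema (column names) from the source itself.
# PRAGMA table_info returns one row per column; index 1 is the column name.
columns = [row[1] for row in conn.execute("PRAGMA table_info(employees)")]

# Step 3: bind each row to the schema, producing model elements keyed by column.
elements = [
    dict(zip(columns, row))
    for row in conn.execute("SELECT * FROM employees ORDER BY id")
]
```

Because the schema is read from the source rather than hard-coded, the same binding step would keep working if the table gained a column.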

Tom Sawyer Perspectives is data agnostic, supporting a wide range of data sources, including:

- Graph databases, such as Neo4j, Amazon Neptune and Neptune Analytics openCypher, and Kuzu

- Graph databases supported by the Apache TinkerPop framework, such as Amazon Neptune Gremlin, Microsoft Azure Cosmos DB, JanusGraph, OrientDB, and TinkerGraph

- Microsoft Excel

- MongoDB databases

- JSON files

- RDF sources, such as Oracle, Neptune, Stardog, AllegroGraph, QLever, and MarkLogic databases and RDF-formatted files

- RESTful API servers

- SQL JDBC-compliant databases, including Microsoft SQL Server, MySQL, Oracle, and Postgres

- Structured text documents

- XML

This versatility lets organizations federate data that resides in different sources and uses different structures, giving them real-time access to a unified view of that data. Data federation enhances their data management capabilities and addresses the data silo issue.

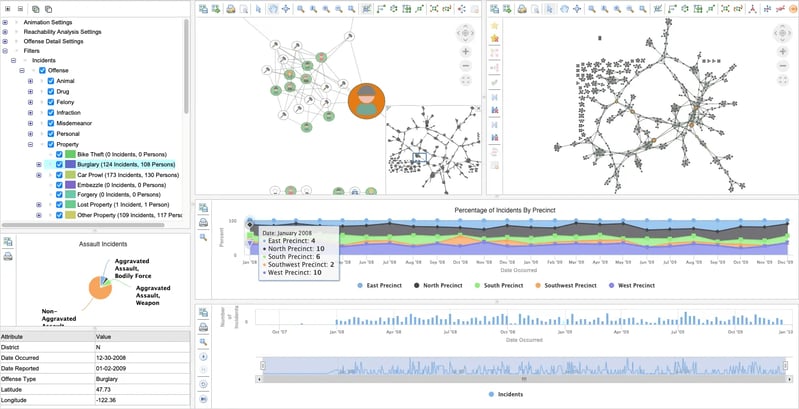

And we don't stop there. Perspectives can reveal valuable insights in federated data through powerful visualizations and analysis.

Benefits of federating data

Modern enterprises struggle to manage their data stores efficiently and cost-effectively, and in many cases must continue to maintain legacy systems that use older data storage technology. The problem is compounded by the need for businesses to understand and utilize the data contained in these various systems to their competitive advantage.

Uncovering the important data in these data silos requires purpose-built techniques and tools to enable efficient and improved decision-making.

Data federation has become indispensable for organizations seeking to harness the power of their collective data assets. The benefits of data federation extend far beyond data consolidation, touching every aspect of an organization's operations and strategic decision-making. Here's why data federation is crucial for organizations navigating the complexities of the modern data-driven world of fast-growing data and data silos:

Informed decision-making

Having a consolidated view of data from different systems provides a single source of truth for accurate decision-making.

This comprehensive insight enables businesses to make more informed choices, driving strategic initiatives and operational improvements.

New insights exposed

With federated data, you can apply specialized visualization and analysis techniques to expose interesting and previously unseen results.

This approach provides a more accurate, holistic view across the entire enterprise.

Increased productivity

Data consumers have a single source of truth to consult, enabling them to make mission-critical decisions quickly and accurately.

This accessibility significantly boosts productivity, allowing users to focus on analysis rather than data collection.

Improved data agility

Because data federation removes the need for data migration or synchronization, along with the latency those steps introduce, data can be accessed and queried in real time.

This improvement in data agility and flexibility is crucial for organizations that need to respond rapidly to changing market conditions or internal demands.

Cost and time savings

Data federation reduces the dependency on IT teams to develop custom data integrations or move data around, leading to significant cost and time savings.

Organizations can allocate these resources to more strategic initiatives, enhancing overall efficiency and competitiveness.

Eliminate data redundancy

Unlike traditional data integration methods that often require moving or copying data to a central data lake or data warehouse, data federation allows data to remain in its source systems.

This approach minimizes redundancy, reduces storage costs, and simplifies data management.

Real-time data access

Data federation facilitates instant access to the latest data across the enterprise, enabling real-time analytics and timely decision-making.

It also offers enhanced responsiveness so organizations can quickly respond to internal and external developments, gaining a competitive edge.

Flexibility and scalability

Data federation offers exceptional flexibility and scalability, accommodating the evolving data landscape of an organization without significant restructuring or investment.

It seamlessly integrates new data sources and scales to handle increasing data volumes and complexity.

Enhanced data quality and governance

By centralizing access to data through a virtual layer, data federation facilitates better data quality management and governance practices.

It provides tools for monitoring, cleaning, and securing data across the organization, ensuring compliance with standards and regulations.

Data federation is easy with Perspectives

One of the standout advantages of data federation is its simplicity and adaptability, regardless of the underlying data storage or format. This ease of data federation and integration becomes particularly evident when using Perspectives, which streamlines the process across a variety of data sources.

Perspectives simplifies the federation process whether you are dealing with a traditional relational database that organizes data into predefined tables, a graph database that emphasizes the relationships between data points, or RESTful APIs that access data over the web.

Here's how Perspectives enhances ease of use in diverse data environments:

Relational Databases

For organizations relying on structured data stored in SQL databases, Perspectives facilitates seamless integration by mapping table schemas into a unified virtual schema. This process allows users to query and manipulate data across multiple relational databases as if they were interacting with a single database.
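The idea of querying several relational databases as one can be illustrated with SQLite's `ATTACH DATABASE` statement. This is a SQLite-specific stand-in, not a description of how Perspectives maps schemas, and the `products` and `stock` tables are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")                       # first database ("main")
conn.execute("ATTACH DATABASE ':memory:' AS warehouse")  # second, separate database

conn.execute("CREATE TABLE main.products (id INTEGER PRIMARY KEY, name TEXT)")
conn.execute("CREATE TABLE warehouse.stock (product_id INTEGER, qty INTEGER)")
conn.executemany("INSERT INTO main.products VALUES (?, ?)", [(1, "Bolt"), (2, "Nut")])
conn.executemany("INSERT INTO warehouse.stock VALUES (?, ?)", [(1, 50), (2, 15), (1, 20)])

# One query spans both databases as if they shared a single schema.
rows = conn.execute(
    """
    SELECT p.name, SUM(s.qty)
    FROM main.products AS p
    JOIN warehouse.stock AS s ON s.product_id = p.id
    GROUP BY p.id, p.name
    ORDER BY p.name
    """
).fetchall()
```

The join runs without copying `stock` into the first database, which is the property a unified virtual schema is meant to provide across independent systems.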

Graph Databases

In cases where data relationships are as crucial as the data itself, such as Neo4j, Amazon Neptune, or Kuzu, Perspectives can virtualize these connections. By doing so, it offers a straightforward way to incorporate complex, relationship-driven insights into the federated data model without the need for extensive custom coding or transformation efforts.

RESTful APIs

For data that is accessed via APIs, such as web services or cloud-based platforms, Perspectives can abstract the API layer. This enables direct queries and integrations into the federated model, making external data sources as accessible as internal databases.
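One way to picture abstracting an API layer is a thin wrapper that turns an endpoint into something callers can filter like a table. The Python sketch below is an assumption-laden illustration: `make_rest_source`, the `https://api.example.com` URL, and the `tickets` resource are hypothetical, and a canned `fake_fetch` function replaces the real HTTP call so the example runs offline.

```python
import json
from typing import Callable


def make_rest_source(fetch: Callable[[str], str], base_url: str):
    """Wrap a REST endpoint so callers can query it like any other source."""

    def query(resource: str, **filters):
        records = json.loads(fetch(f"{base_url}/{resource}"))
        # Keep only records whose fields match every requested filter value.
        return [r for r in records if all(r.get(k) == v for k, v in filters.items())]

    return query


def fake_fetch(url: str) -> str:
    """Canned response standing in for an HTTP GET (no network access)."""
    return '[{"id": 1, "status": "open"}, {"id": 2, "status": "closed"}]'


tickets = make_rest_source(fake_fetch, "https://api.example.com")
open_tickets = tickets("tickets", status="open")
```

Passing the fetch function in as a parameter keeps the wrapper independent of any particular HTTP library, so a real client could be substituted without changing the query logic.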

Broad Data Source Compatibility

Perspectives is designed to be data agnostic, supporting a wide range of data sources beyond those mentioned. This includes, but is not limited to, Microsoft Excel, MongoDB, JSON files, RDF sources, and SQL JDBC-compliant databases. This versatility ensures that organizations can leverage the full spectrum of their data assets, regardless of where or how the data is stored.

Streamlined Integration Process

The integration process with Perspectives involves connecting to the data sources using provided integrators, extracting the schema to understand the data structure, and then binding the data source with the schema. This method reduces the complexity and time required for integrating disparate data sources, enabling more agile data management and analysis practices.

By significantly lowering the barriers to effective data federation, Perspectives empowers organizations to harness their data's full potential, enhancing decision-making, operational efficiency, and strategic agility.

Use cases and applications

Data federation's adaptability and robustness make it valuable across numerous scenarios, from integrating enterprise data systems to supporting advanced analytics and managing cloud-based data stores. Below are expanded insights into some pivotal use cases:

Integrating Enterprise Data Across Systems

Data federation amalgamates data dispersed across diverse enterprise systems, such as CRM (Customer Relationship Management), ERP (Enterprise Resource Planning), and SCM (Supply Chain Management) systems. This unified data approach provides organizations with a holistic view of their operational, customer interaction, and supply chain activities, eliminating data silos, facilitating more unified strategies and enhancing operational efficiencies. This integration is pivotal for organizations aiming to leverage their collective data for comprehensive insights, driving strategic decisions and operational improvements.

Empowering Business Intelligence and Reporting

Data federation significantly enhances business intelligence (BI) and reporting capabilities by aggregating data from multiple sources into a single, virtual repository. This enables more comprehensive analytics, richer insights, and better-informed decisions without the latency associated with traditional data warehousing solutions.

Managing Data in Cloud Environments

With the increasing adoption of cloud computing, data federation plays a crucial role in managing data across hybrid and multi-cloud environments. It allows organizations to seamlessly access and integrate data stored in different cloud services and on-premises databases, facilitating a flexible and scalable data management strategy that supports digital transformation.

Data federation versus data integration

Data federation and data integration both aim to provide a cohesive view of data from multiple sources. With Perspectives, they go hand in hand, and you get the best of both worlds.

Data Federation

Data federation with Perspectives employs a virtual approach, leaving the data in its original sources and using software to aggregate multiple data sources at the same time and bind data from those sources into a single Tom Sawyer model. This method offers several advantages, including reduced data redundancy, minimized storage costs, and the ability to aggregate, present, and query data in real time or near real time.

Data Integration

Data integration with Perspectives involves utilizing purpose-built connectors to connect to a data source, extract the schema, bind data from that data source to the Tom Sawyer model, and commit changes back to the data source. This eliminates the need for a physical repository, such as a data warehouse or data lake, which can be resource-intensive, requiring significant effort in data cleaning, transformation, storage management, and cost.


Copyright © 2026 Tom Sawyer Software.

All rights reserved. | Terms of Use | Privacy Policy
